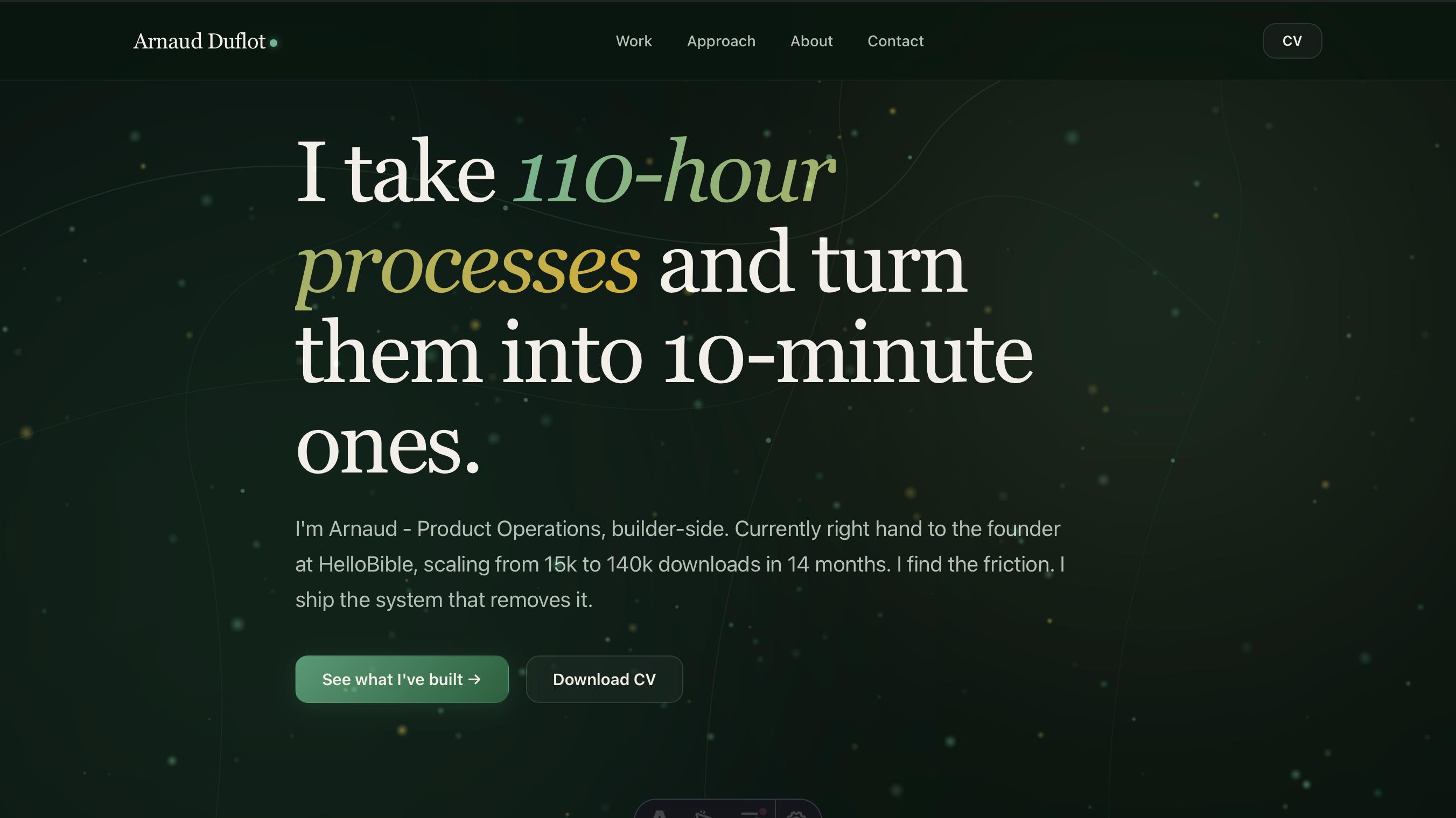

I Shipped This Site Without Writing Code

The strongest demo of my method is the site you're on. I'm not a dev. I orchestrated Claude through every line of code, every visual decision, every trade-off. Here's how, and why it matters.

Why this case study matters more than the others

Every other case study in this portfolio shows me solving an ops problem at HelloBible with AI - user acquisition pipelines, voice of customer extraction, premium user identification, support automation. They prove I do this at work.

This one proves something more useful for the person reading: I can pilot AI to ship things I couldn’t ship alone. Not just connect Zapier nodes. Build a bioluminescent forest in WebGL with adaptive performance tiers. Hire someone like me in 2026 and you don’t add headcount - you multiply what your team can execute.

The site you’re on right now is the proof. I didn’t write the code. Claude did. I made every decision.

The constraint

I work in Product Ops. I’m not a developer. I’ve never built a website. I can read code well enough to know if it’s doing what I asked, but I can’t write a React component from scratch.

I had three options:

- Pay an agency (5-10k€, 6 weeks, generic template behind a stripped-down CMS)

- Use Webflow (faster but every other portfolio looks the same)

- Build something custom with Claude as my dev

The third option wasn’t safer. It was harder. But it was the only one that doubled as a case study.

What I actually did

The method is the same as every operations system I build at HelloBible: decompose, decide, ship, iterate, document.

Decompose: I listed every dimension a portfolio site has, eleven in total, including stack, hosting, design, performance, SEO, content management, analytics, accessibility, animations, and mobile behavior.

Decide: For each dimension, I had a real conversation with Claude about trade-offs. Astro vs Next.js vs vanilla. Static vs server-rendered. Dark mode vs light. Fraunces vs Söhne. I rejected Claude’s suggestions when they didn’t fit (he wanted to add a sitemap integration that was incompatible with our Astro version - I caught it, we removed it).

Ship: Claude wrote the code. I read every diff before validating. When something looked off, I asked why. When I didn’t understand a decision, I made him explain it.

Iterate: When the WebGL particles felt too sparse, I said so. When the mouse trail felt redundant after we added hover morphing, I asked Claude what he thought - and we removed it. When the LinkedIn banner he generated wasn’t wow enough, I made him explain why, and we redid it three times.

Document: This case study is that documentation.

What I’m actually selling

When you hire for Product Ops in 2026, the relevant question isn’t “what can this person ship with their own hands?” It’s “what can this person ship by piloting AI agents?”

For me, those are two very different answers. This site is one data point. The other four case studies in the portfolio are four more.

The technical details, if you want them

The stack

- Astro 4 - static site generator, zero JavaScript by default, MDX for content

- WebGL - tier 3 particle system using GPU acceleration with additive blending

- Canvas 2D - fallback for mobile and devices without WebGL support

- SVG - animated filaments with mouse-reactive control points

- Netlify - CDN hosting, automatic deploys from GitHub

The visual system - built in 6 layers

Layer 1 - Atmosphere: CSS radial gradients, fixed position, animated drift. Zero JavaScript.

Layer 2 - SVG filaments: Five paths that draw themselves on load via stroke-dashoffset animation. Pure CSS.

Layer 3 - Particle system: Canvas 2D for mobile, WebGL for desktop. Mycelium connections (lines drawn between particles less than 160px apart) on tier 2+.
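For the curious, here is a minimal sketch of that distance check in TypeScript. It is not the site's actual code: the `Particle` shape and the `findConnections` name are assumptions made for illustration, and only the 160px threshold comes from the description above.

```typescript
// A particle position in CSS pixels (hypothetical shape for this sketch).
interface Particle {
  x: number;
  y: number;
}

// Threshold from the text: link particles that are closer than 160px.
const LINK_DISTANCE = 160;

// Returns index pairs [i, j] with i < j for every pair of particles
// that should be joined by a line.
function findConnections(
  particles: Particle[],
  maxDistance: number = LINK_DISTANCE
): Array<[number, number]> {
  const pairs: Array<[number, number]> = [];
  // Compare squared distances so no square root is needed per pair.
  const maxSquared = maxDistance * maxDistance;
  for (let i = 0; i < particles.length; i++) {
    for (let j = i + 1; j < particles.length; j++) {
      const dx = particles[i].x - particles[j].x;
      const dy = particles[i].y - particles[j].y;
      if (dx * dx + dy * dy < maxSquared) {
        pairs.push([i, j]);
      }
    }
  }
  return pairs;
}
```

The nested loop checks every pair, so the cost grows with the square of the particle count. That is one plausible reason to switch the connections off on the mobile tier.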

Layer 4 - Mouse-reactive filaments: SVG control points recalculate every frame based on cursor position. Smooth interpolation (0.045 factor).
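The smoothing step can be sketched as a per-frame linear interpolation. This is an illustrative version, not the code Claude wrote: `Point`, `easeToward`, and the simulated cursor are assumptions, and only the 0.045 factor comes from the description above.

```typescript
// A 2D point in CSS pixels (hypothetical shape for this sketch).
interface Point {
  x: number;
  y: number;
}

// Interpolation factor from the text.
const SMOOTHING = 0.045;

// Moves `current` a fixed fraction of the way toward `target`.
// Called once per frame, this produces an ease-out motion.
function easeToward(current: Point, target: Point, factor: number = SMOOTHING): Point {
  return {
    x: current.x + (target.x - current.x) * factor,
    y: current.y + (target.y - current.y) * factor,
  };
}

// Simulate 60 frames of a control point chasing a fixed cursor position.
let control: Point = { x: 0, y: 0 };
const cursor: Point = { x: 100, y: 0 };
for (let frame = 0; frame < 60; frame++) {
  control = easeToward(control, cursor);
}
```

Each frame closes 4.5% of the remaining gap, so after 60 frames the control point has covered roughly 94% of the distance. A small factor like this makes the filaments trail the cursor instead of snapping to it.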

Layer 5 - WebGL particles: 280 particles with additive blending. Fragment shader handles glow. Depth of field via Z dimension.

Layer 6 - Interactivity: Scroll reaction (capped at 40px/frame to avoid anchor-jump explosions), hover morphing (particles within 260px of buttons drift toward them).
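Both interactions in Layer 6 come down to small pure functions. The sketch below is an assumption-heavy illustration, not the production code: the function names, the `strength` value, and the linear falloff are all hypothetical. Only the 40px cap and the 260px radius come from the description above.

```typescript
// Values from the text.
const MAX_SCROLL_DELTA = 40; // px per frame
const HOVER_RADIUS = 260; // px around a button

// Clamps the per-frame scroll delta so a sudden anchor jump
// cannot fling particles off screen.
function clampScrollDelta(delta: number, cap: number = MAX_SCROLL_DELTA): number {
  return Math.max(-cap, Math.min(cap, delta));
}

// A 2D vector in CSS pixels (hypothetical shape for this sketch).
interface Vec {
  x: number;
  y: number;
}

// Returns the per-frame drift applied to a particle near a button.
// `strength` and the linear falloff are assumptions for illustration.
function hoverPull(
  particle: Vec,
  button: Vec,
  radius: number = HOVER_RADIUS,
  strength: number = 0.02
): Vec {
  const dx = button.x - particle.x;
  const dy = button.y - particle.y;
  const distance = Math.hypot(dx, dy);
  // Outside the radius (or exactly on the button) there is no pull.
  if (distance >= radius || distance === 0) {
    return { x: 0, y: 0 };
  }
  // The pull fades linearly to zero at the edge of the radius.
  const falloff = 1 - distance / radius;
  return { x: dx * strength * falloff, y: dy * strength * falloff };
}
```

With these definitions, a 900px anchor jump is treated as a 40px scroll, and a particle 300px from a button is left alone.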

Performance tiers

- Mobile: Canvas 2D, 50 ambient particles, no mycelium, no hover morphing

- Desktop: WebGL by default, falling back to Canvas 2D if WebGL is unavailable
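The tier logic above can be sketched as a single selection function. This is a hypothetical reconstruction, not the site's code: `DeviceInfo`, `RenderConfig`, and `pickRenderConfig` are invented names, and the desktop values (280 particles, connections and hover morphing enabled, same count on the Canvas 2D fallback) are assumptions pieced together from the layer descriptions.

```typescript
type Renderer = "webgl" | "canvas2d";

// What the page needs to know about the device (hypothetical shape).
interface DeviceInfo {
  isMobile: boolean;
  hasWebGL: boolean;
}

// The settings a tier resolves to (hypothetical shape).
interface RenderConfig {
  renderer: Renderer;
  particleCount: number;
  mycelium: boolean;
  hoverMorphing: boolean;
}

function pickRenderConfig(device: DeviceInfo): RenderConfig {
  if (device.isMobile) {
    // Mobile tier, as listed above.
    return { renderer: "canvas2d", particleCount: 50, mycelium: false, hoverMorphing: false };
  }
  // Desktop tier: prefer WebGL, fall back to Canvas 2D.
  // Keeping 280 particles on the fallback is an assumption.
  return {
    renderer: device.hasWebGL ? "webgl" : "canvas2d",
    particleCount: 280,
    mycelium: true,
    hoverMorphing: true,
  };
}
```

In a browser, `hasWebGL` could be derived by checking whether `document.createElement("canvas").getContext("webgl")` returns a non-null context. How `isMobile` is detected is left open here.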

Things that went wrong

- `@astrojs/sitemap` requires Astro 6 - had to remove it entirely

- MDX parses `<3 seconds` as a JSX tag - broke two case studies until fixed

- `{variable}` in MDX content is parsed as a JSX expression - same problem

- LinkedIn rejects SVG OG images - had to install `rsvg-convert` for PNG export
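The two MDX pitfalls share a fix: escape the characters MDX would otherwise read as JSX. Below is a deliberately naive sketch of a helper for plain prose strings. The `escapeMdxProse` name is hypothetical, it is not what the site uses, and it should not be run over code blocks or intentional JSX. It relies on MDX accepting backslash escapes for `<` and `{`.

```typescript
// Escapes characters in plain prose that MDX would try to parse as JSX:
//  - "<" directly followed by a digit (e.g. "<3 seconds")
//  - any curly brace (e.g. "{variable}")
// Naive by design: do not run it over code blocks or real JSX.
function escapeMdxProse(text: string): string {
  return text
    .replace(/<(?=\d)/g, "\\<")
    .replace(/[{}]/g, (brace) => "\\" + brace);
}

// Example inputs taken from the failures described above.
const escapedTag = escapeMdxProse("Loads in <3 seconds");
const escapedBraces = escapeMdxProse("{variable}");
```

Here `escapedTag` holds `Loads in \<3 seconds` and `escapedBraces` holds `\{variable\}`, both of which MDX renders as literal text.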

Want to talk about something like this?

Email me, send a LinkedIn message, or download the CV. Conversations are what this site is built for.